Xray to Cloud Migration: From On-Premises to Cloud with Precision

Overview Discover how a leading UK legal technology company successfully migrated from on-premises Jira + Xray Server to Jira Cloud, overcoming complex challenges and tight


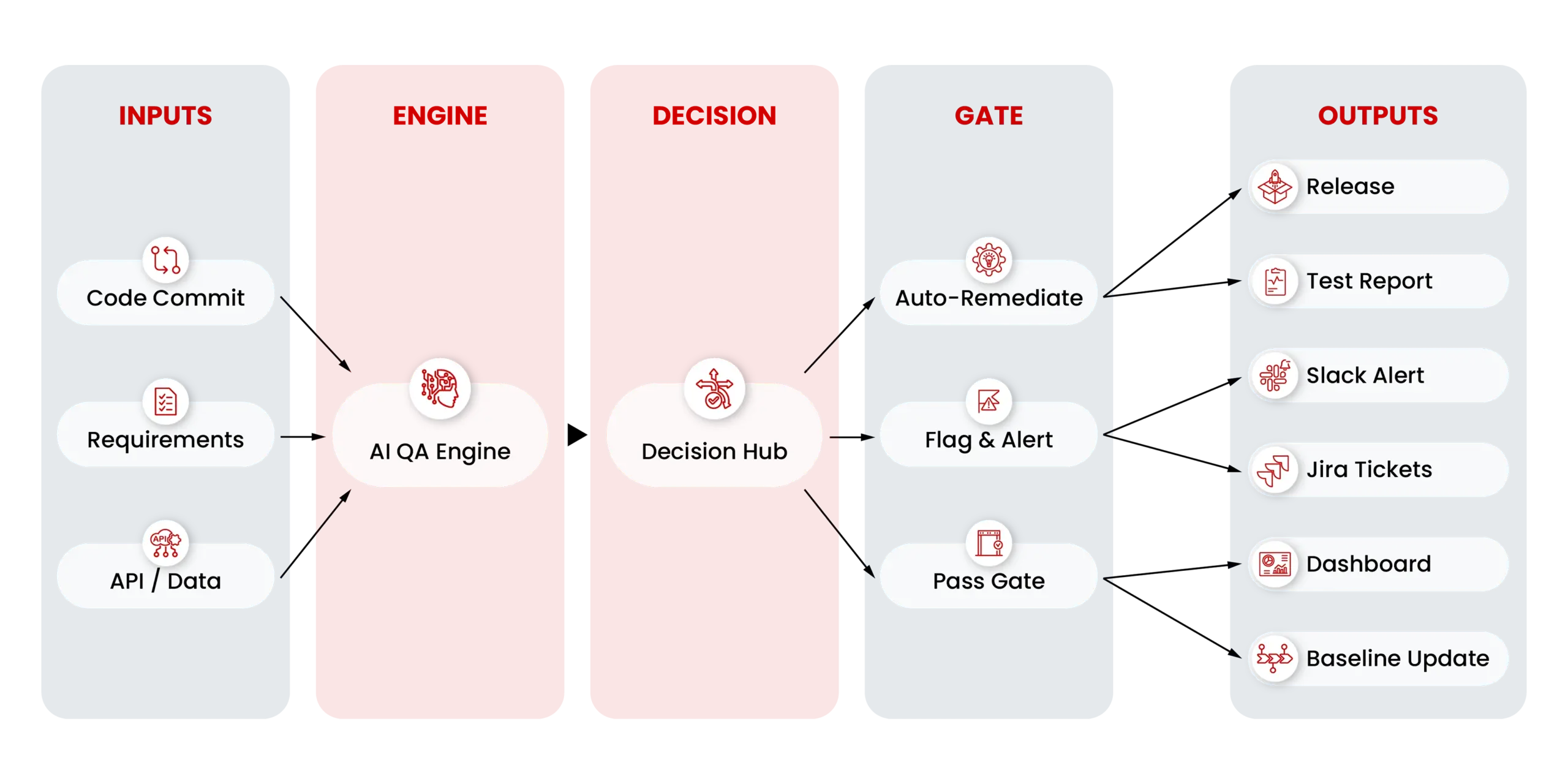

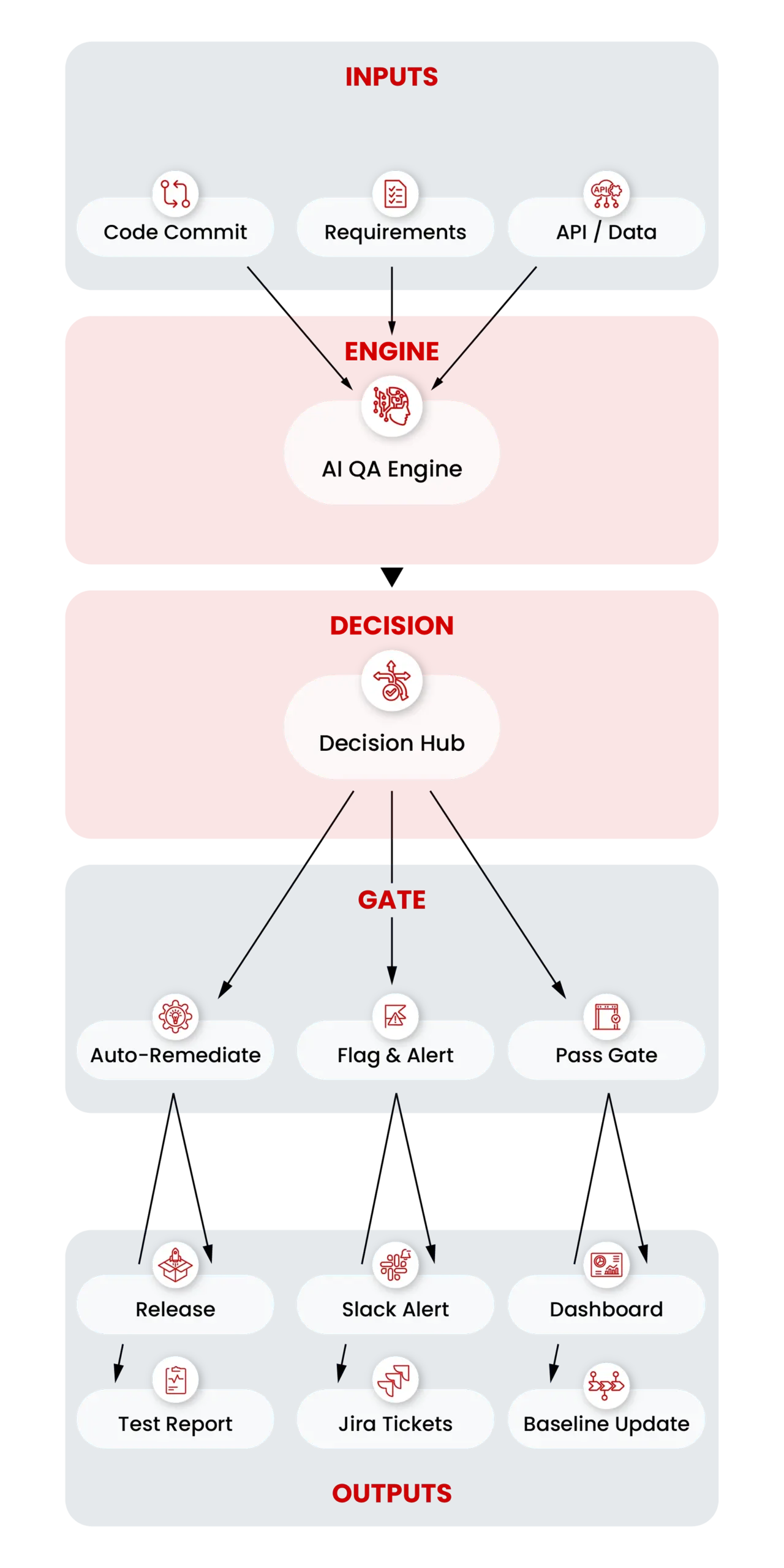

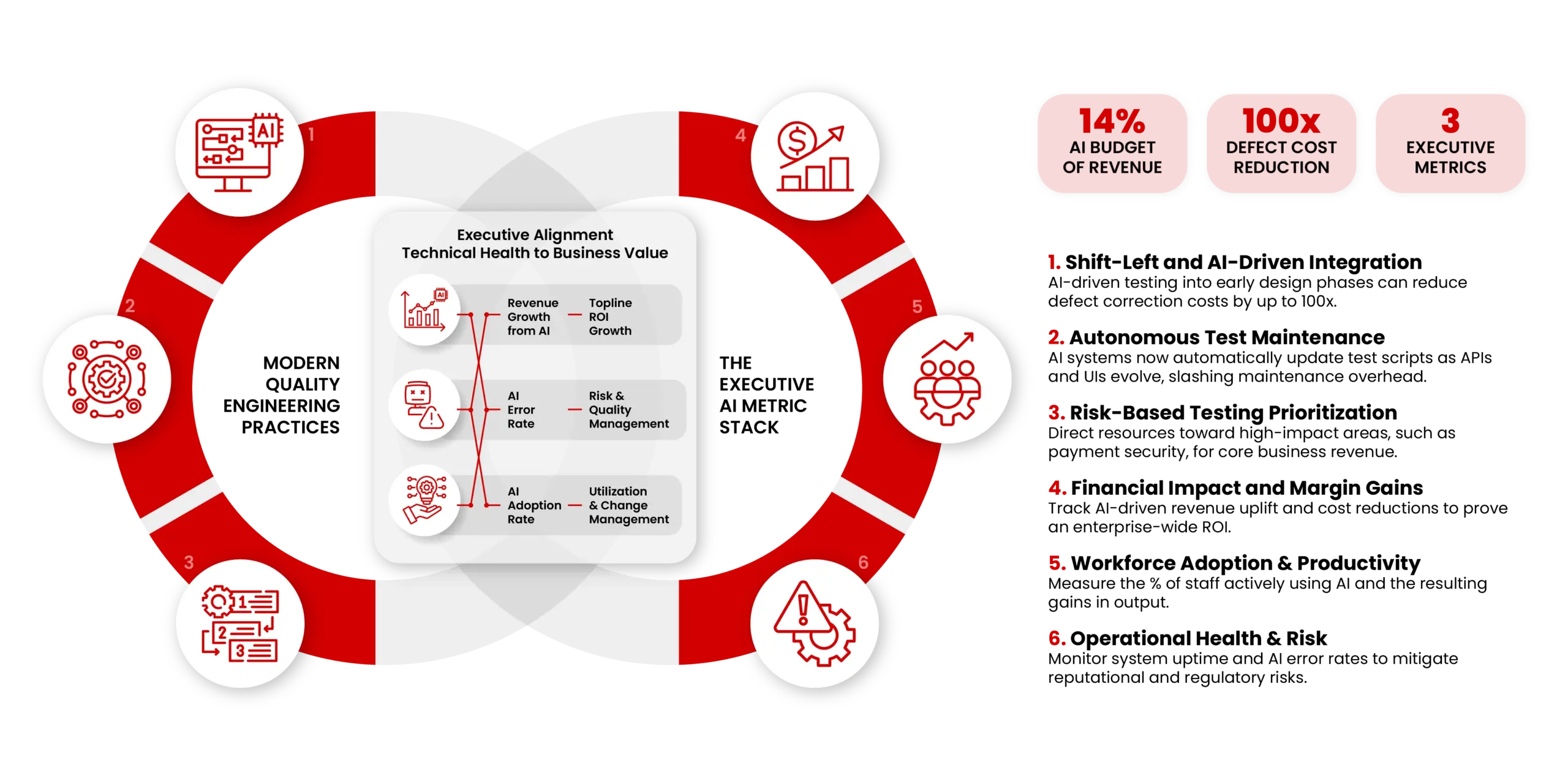

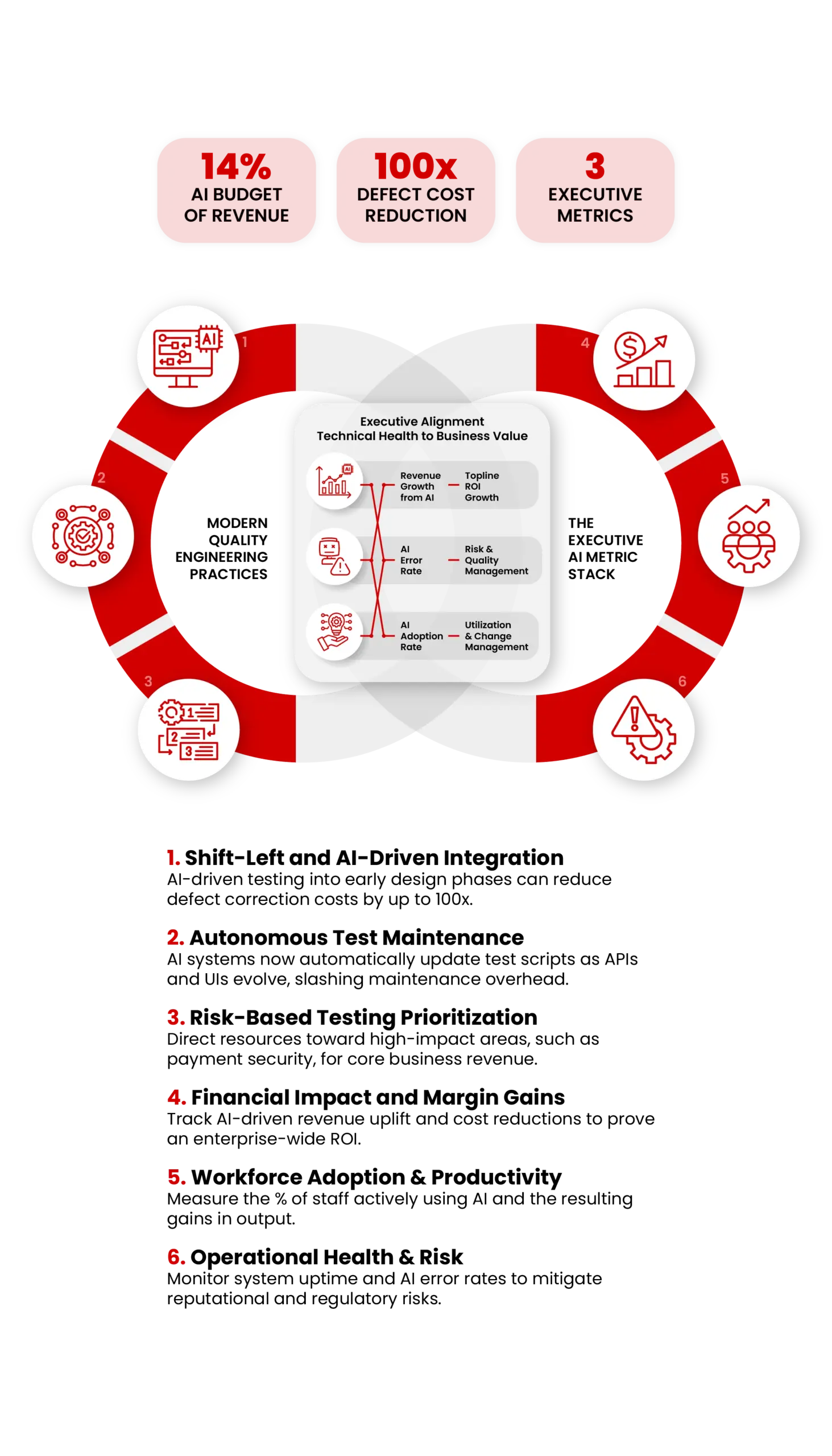

We evaluate and test AI systems (LLMs, RAG, agents, chatbots, and predictive ML) with measurable quality gates, so you can deploy with confidence.

Confident-sounding wrong answers that damage trust. Your AI sounds certain while fabricating the answer entirely.

Systematic unfairness that goes undetected until it becomes a crisis. Legal risk hiding in every output.

Performance degrades silently over time. What worked at launch slowly breaks without warning.

Measuring task accuracy, response consistency, hallucination detection, adversarial robustness, and agent behavior correctness.

Covering toxicity checks, bias testing, data privacy, security vulnerabilities, and governance readiness.

Testing latency, throughput, cost trade-offs, failure handling, and regression across versions.

Covering monitoring, release gates, incident response, and continuous evaluation pipelines for production AI systems.

1. Define use cases, risks, and acceptance criteria, and map the architecture.

2. Build the test suite, golden dataset, scoring rubric, and baseline metrics.

3. Run tests, tune prompts, retrieval, and policies, and implement safeguards and regression tests.

4. Set release gates, automate evaluation, and monitor drift after launch.
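As a rough illustration of how a golden dataset, a baseline metric, and a release gate fit together, here is a minimal sketch. The names `GoldenCase`, `exact_match_score`, and `release_gate`, the exact-match scoring rule, and the threshold values are all assumptions made for this example, not a description of any specific tool or client setup.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class GoldenCase:
    """One hypothetical golden-dataset entry: an input and its expected answer."""
    prompt: str
    expected: str


def exact_match_score(cases: Sequence[GoldenCase], model_fn: Callable[[str], str]) -> float:
    """Return the fraction of cases where the model output equals the expected
    answer, ignoring case and surrounding whitespace."""
    if not cases:
        raise ValueError("golden dataset is empty")
    hits = sum(
        1 for case in cases
        if model_fn(case.prompt).strip().lower() == case.expected.strip().lower()
    )
    return hits / len(cases)


def release_gate(score: float, baseline: float,
                 min_score: float = 0.9, max_regression: float = 0.02) -> bool:
    """Pass only if the score meets the floor (assumed 0.9) and has not dropped
    more than the tolerance (assumed 0.02) below the recorded baseline."""
    return score >= min_score and (baseline - score) <= max_regression
```

In practice the scoring rule would usually be richer than exact match (for example a rubric or an LLM-as-a-judge score), but the gate logic stays the same: compare the current score against a floor and against the previous baseline before a release proceeds.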

Run structured evaluations for LLMs, RAG pipelines, and agents using benchmark suites, LLM-as-a-judge, and automated scoring.

Braintrust | Promptfoo | DeepEval | RAGAS

Build golden datasets, adversarial inputs, and business-focused scenarios to measure quality, regression, and robustness.

Synthetic data | Golden sets | Label Studio

Validate structure, correctness, faithfulness, and consistency with schema checks, rule-based controls, and semantic evaluation.

Schema validation | Faithfulness | LLM-as-a-judge
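A schema check is the simplest of these controls to picture. The sketch below assumes the model is asked to return a JSON object with `answer`, `sources`, and `confidence` fields; that field set and the function name `validate_output` are hypothetical choices for illustration only.

```python
import json

# Hypothetical output contract: field name -> required Python type.
REQUIRED_FIELDS = {"answer": str, "sources": list, "confidence": float}


def validate_output(raw: str) -> list[str]:
    """Return a list of schema problems; an empty list means the output passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be an object"]

    errors = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        elif not isinstance(data[field], expected_type):
            errors.append(f"wrong type for {field}")

    # Rule-based control on top of the type check: confidence must be in [0, 1].
    confidence = data.get("confidence")
    if isinstance(confidence, float) and not 0.0 <= confidence <= 1.0:
        errors.append("confidence out of range")
    return errors
```

Structural checks like this run cheaply on every response, which leaves the more expensive semantic evaluation (faithfulness, LLM-as-a-judge) for outputs that are already well-formed.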

Assess context relevance, retrieval precision, chunking quality, citation support, and grounded response behavior.

Context precision | Recall | Grounding
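To make precision and recall concrete for retrieval, the sketch below uses the plain set-based definitions over chunk identifiers. This is an assumption for illustration: evaluation tools may define their context metrics differently (for example with rank-aware weighting), and the function name and chunk ids here are hypothetical.

```python
from typing import Iterable, Tuple


def retrieval_precision_recall(retrieved: Iterable[str],
                               relevant: Iterable[str]) -> Tuple[float, float]:
    """Set-based precision and recall for one query.

    precision = relevant chunks retrieved / all chunks retrieved
    recall    = relevant chunks retrieved / all relevant chunks
    """
    retrieved_set, relevant_set = set(retrieved), set(relevant)
    hits = len(retrieved_set & relevant_set)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant_set) if relevant_set else 0.0
    return precision, recall
```

For example, if the retriever returns chunks `a, b, c, d` and the labeled relevant chunks are `a, c, e`, two of four retrieved chunks are relevant (precision 0.5) and two of three relevant chunks were found (recall about 0.67).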

Check bias, policy compliance, privacy leakage, unsafe outputs, and prompt injection resilience across critical workflows.

Guardrails | Policy tools | Red teaming

Connect traces, prompt versions, incidents, and performance metrics into dashboards that support ongoing quality improvement.

Langfuse | LangSmith | Arize Phoenix | Monitoring


Challenge Our client, a leading banking group in Southeast Europe, faced the daunting task of creating a complex web application for loans that seamlessly integrated

The Challenge Software applications, particularly web applications, are in a constant state of evolution. This dynamism, while essential for innovation, can create significant hurdles for

Client Overview Our client is a prominent telecommunications holding company based in Asia, renowned as one of the largest in the industry globally. Established in

Hallucination detection rate

Faster deployment cycles with confidence

Let’s assess your current AI solution and define measurable quality gates.
