Apache Kafka is an open-source streaming platform used to build real-time data pipelines and streaming applications.

Configuration

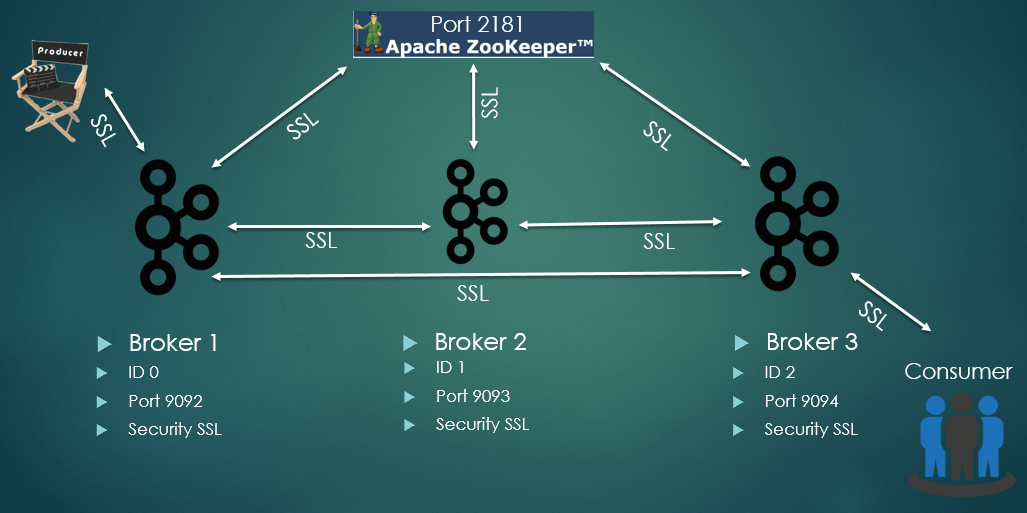

The current cluster consists of Apache ZooKeeper, three Kafka brokers, one Producer and one Consumer. All communication between the nodes is secured with SSL.

Security

A Java KeyStore (JKS) is used on each broker in the cluster to store its certificates and its private/public key pair.
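As a rough sketch, a keystore of this kind could be created with the JDK's keytool. The alias, distinguished name, key size, validity, default password and the `run` dry-run helper are assumptions made for illustration, not values taken from this setup.

```shell
# Assumed values; replace them with the real keystore path and password.
KEYTOOL="${KEYTOOL:-keytool}"
KEYSTORE="${KEYSTORE:-server.keystore.jks}"
STOREPASS="${STOREPASS:-changeit}"

# Hypothetical helper: prints the command by default, runs it when EXECUTE=1.
run() { if [ "${EXECUTE:-0}" = "1" ]; then "$@"; else printf '%s\n' "$*"; fi; }

# Generate an RSA key pair with a self-signed certificate in a JKS keystore.
create_broker_keystore() {
  run "$KEYTOOL" -genkeypair \
    -keystore "$KEYSTORE" -storetype JKS \
    -alias localhost \
    -keyalg RSA -keysize 2048 -validity 365 \
    -dname "CN=localhost" \
    -storepass "$STOREPASS" -keypass "$STOREPASS"
}

create_broker_keystore
```

With `EXECUTE=1` the command is executed instead of printed. In a real cluster the certificate would normally also be signed by a CA that is imported into every truststore.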

ZooKeeper SSL settings:

- client.secure=true

- ssl.keyStore.location=/path/to/ssl/server.keystore.jks

- ssl.keyStore.password=<<password>>

- ssl.trustStore.location=/path/to/ssl/server.truststore.jks

- ssl.trustStore.password=<<password>>

Broker SSL settings:

- ssl.client.auth=required

- ssl.enabled.protocols=TLSv1.2,TLSv1.1,TLSv1

- ssl.keystore.type=JKS

- ssl.truststore.type=JKS

- ssl.truststore.location=/path/to/ssl/server.truststore.jks

- ssl.truststore.password=<<password>>

- ssl.keystore.location=/path/to/ssl/server.keystore.jks

- ssl.keystore.password=<<password>>

- ssl.key.password=<<password>>

- security.inter.broker.protocol=SSL

In the broker SSL configuration it is important to set ssl.client.auth=required and security.inter.broker.protocol=SSL. The first setting rejects connections from clients that do not present a valid SSL certificate, and the second enforces SSL communication between the brokers in the cluster.

The enabled security protocols are TLSv1, TLSv1.1 and TLSv1.2. Alternatively, the list can be restricted to the latest version only (TLSv1.2 here) to avoid the known weaknesses of the older protocol versions.
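The broker settings discussed above could be collected into a properties fragment along the following lines. This is a minimal sketch: the output file name `broker-ssl.properties` is hypothetical, and the paths and passwords are the placeholders used in this article.

```shell
# Hypothetical output file; its contents would be merged into server.properties.
BROKER_SSL_FILE="${BROKER_SSL_FILE:-broker-ssl.properties}"

cat > "$BROKER_SSL_FILE" <<'EOF'
# Require every client to authenticate with a certificate
ssl.client.auth=required
# Protocol versions used in this setup; a stricter alternative is
# ssl.enabled.protocols=TLSv1.2
ssl.enabled.protocols=TLSv1.2,TLSv1.1,TLSv1
ssl.keystore.type=JKS
ssl.truststore.type=JKS
ssl.truststore.location=/path/to/ssl/server.truststore.jks
ssl.truststore.password=<<password>>
ssl.keystore.location=/path/to/ssl/server.keystore.jks
ssl.keystore.password=<<password>>
ssl.key.password=<<password>>
# Brokers talk to each other over SSL only
security.inter.broker.protocol=SSL
EOF
```

Each broker also needs a listener that uses the SSL protocol. Its host and port depend on the environment and are not shown here.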

The same security parameters are set in the Producer and Consumer applications so that they can produce messages to and consume messages from a topic.

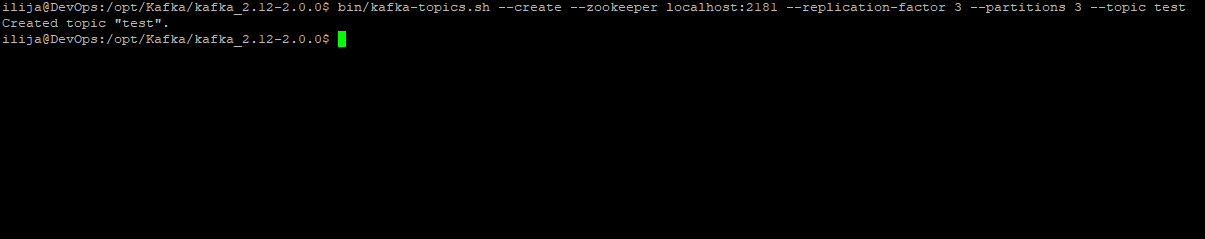
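For the command-line Producer and Consumer, the client-side parameters might be kept in a small properties file such as the one sketched below. The file name `client-ssl.properties` and the client keystore and truststore paths are assumptions.

```shell
# Hypothetical file name; it is passed to the console tools as a config file.
CLIENT_SSL_FILE="${CLIENT_SSL_FILE:-client-ssl.properties}"

cat > "$CLIENT_SSL_FILE" <<'EOF'
security.protocol=SSL
ssl.truststore.location=/path/to/ssl/client.truststore.jks
ssl.truststore.password=<<password>>
# Keystore entries are needed because the brokers set ssl.client.auth=required
ssl.keystore.location=/path/to/ssl/client.keystore.jks
ssl.keystore.password=<<password>>
ssl.key.password=<<password>>
EOF
```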

Creating a topic

To create a topic we use the kafka-topics.sh command-line tool that ships with the standard Kafka release, in our case kafka_2.12-2.0.0.

Example:

Topic: test

Replication factor: 3

Partitions: 3

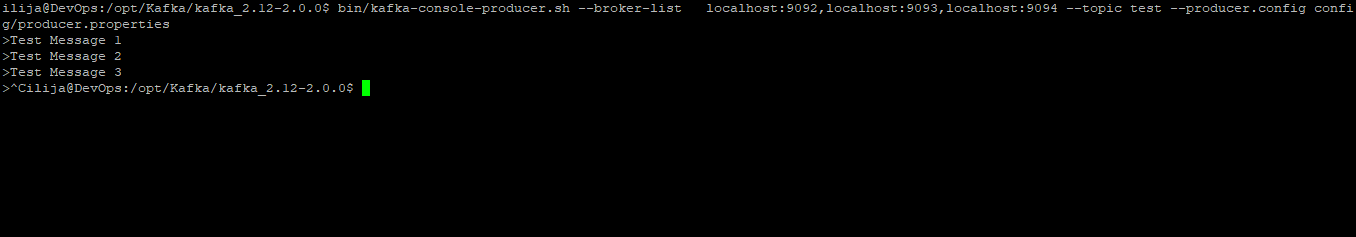
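A command matching the example values above might look like the following sketch. The installation path, the ZooKeeper address and the `run` dry-run helper are assumptions. In Kafka 2.0.0, kafka-topics.sh connects through `--zookeeper`.

```shell
# Assumed locations; adjust to the actual installation and ZooKeeper node.
KAFKA_HOME="${KAFKA_HOME:-/opt/kafka_2.12-2.0.0}"
ZOOKEEPER="${ZOOKEEPER:-localhost:2181}"

# Hypothetical helper: prints the command by default, runs it when EXECUTE=1.
run() { if [ "${EXECUTE:-0}" = "1" ]; then "$@"; else printf '%s\n' "$*"; fi; }

# Create topic "test" with 3 partitions, each replicated to all 3 brokers.
create_test_topic() {
  run "$KAFKA_HOME/bin/kafka-topics.sh" --create \
    --zookeeper "$ZOOKEEPER" \
    --replication-factor 3 \
    --partitions 3 \
    --topic test
}

create_test_topic
```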

Creating messages

To write messages to a topic we use the kafka-console-producer.sh command-line tool.

Example:

Topic: test

Messages:

Test Message 1

Test Message 2

Test Message 3

After entering the test messages, press Ctrl+C to end the producer session.

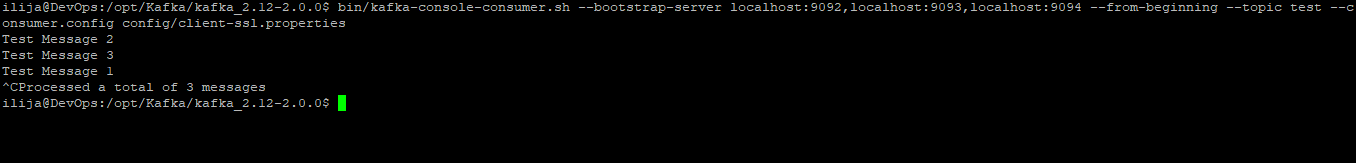
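The same three messages could also be sent non-interactively, as in this sketch. The broker address `localhost:9093`, the installation path, the `client-ssl.properties` file name and the `run` dry-run helper are assumptions.

```shell
# Assumed locations; adjust to the actual installation and SSL listener.
KAFKA_HOME="${KAFKA_HOME:-/opt/kafka_2.12-2.0.0}"
BROKERS="${BROKERS:-localhost:9093}"
CLIENT_SSL_FILE="${CLIENT_SSL_FILE:-client-ssl.properties}"

# Hypothetical helper: prints the command by default, runs it when EXECUTE=1.
run() { if [ "${EXECUTE:-0}" = "1" ]; then "$@"; else printf '%s\n' "$*"; fi; }

# Pipe the test messages into the console producer, one message per line.
produce_test_messages() {
  printf '%s\n' "Test Message 1" "Test Message 2" "Test Message 3" |
    run "$KAFKA_HOME/bin/kafka-console-producer.sh" \
      --broker-list "$BROKERS" \
      --topic test \
      --producer.config "$CLIENT_SSL_FILE"
}

produce_test_messages
```

Because the input comes from a pipe, the producer exits at end of input and no Ctrl+C is needed.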

Consuming messages

To read messages from a topic we use the kafka-console-consumer.sh command-line tool, which prints all messages to STDOUT.

Once started, the command displays the current messages and then waits for new ones until Ctrl+C is pressed.
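A consumer invocation for the test topic might look like the sketch below. The broker address `localhost:9093`, the installation path, the `client-ssl.properties` file name and the `run` dry-run helper are assumptions. The `--from-beginning` flag makes the tool print the messages already stored in the topic before it waits for new ones.

```shell
# Assumed locations; adjust to the actual installation and SSL listener.
KAFKA_HOME="${KAFKA_HOME:-/opt/kafka_2.12-2.0.0}"
BROKERS="${BROKERS:-localhost:9093}"
CLIENT_SSL_FILE="${CLIENT_SSL_FILE:-client-ssl.properties}"

# Hypothetical helper: prints the command by default, runs it when EXECUTE=1.
run() { if [ "${EXECUTE:-0}" = "1" ]; then "$@"; else printf '%s\n' "$*"; fi; }

# Read topic "test" from the first stored message and keep listening.
consume_test_messages() {
  run "$KAFKA_HOME/bin/kafka-console-consumer.sh" \
    --bootstrap-server "$BROKERS" \
    --topic test \
    --from-beginning \
    --consumer.config "$CLIENT_SSL_FILE"
}

consume_test_messages
```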

Overview

Apache Kafka is a messaging platform for large-scale systems that demand fast data transfer between nodes and where performance is a business priority.