Artificial intelligence is everywhere in the enterprise conversation right now.

Leaders and teams across the organization are discussing it. Departments are experimenting with AI technologies. New pilots are launching across functions. In many organizations, there is no shortage of enthusiasm, tools, or ideas. On paper, it looks like progress. And yet, when you look more closely at the return on investment (ROI), a different picture often emerges.

Despite all the activity, many enterprises are still struggling to turn AI into something operationally meaningful. They may have copilots, internal experiments, or promising proofs of concept, but very little has actually changed in how the business runs. Workflows remain the same. Decisions are still manual. Teams still work around AI instead of having it embedded in the business.

This is the real challenge of enterprise AI adoption.

The issue is usually not a lack of interest. It is not even a lack of use cases. The problem is that many organizations are treating AI as a set of disconnected experiments rather than as an enterprise capability that needs structure, governance, and an operating model. In practice, AI adoption often stalls between excitement and execution.

If enterprises want AI to move beyond curiosity and into scalable operations, they need to focus less on isolated experimentation and more on how AI is validated, governed, integrated, and managed over time.

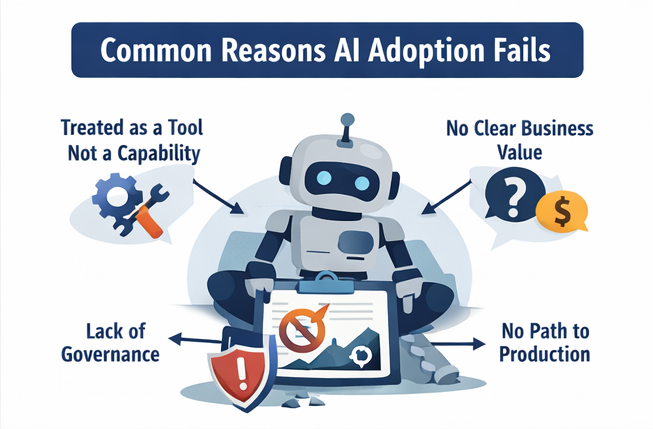

Why AI adoption fails in enterprises

AI adoption rarely fails because the technology itself is not powerful enough. It fails because the organization is not set up to move from experimentation to execution. That gap shows up in several ways.

1. AI is treated as a tool, not an operating capability

This is one of the most common problems. Many organizations adopt AI in the form of chatbots, copilots, assistants, or team-level experiments. These can be useful, but they often remain separate from the actual workflows that drive business performance. As a result, AI sits next to the business instead of inside it.

This creates the illusion of progress. Teams are busy. Tools are being tested. There may even be early wins. But unless AI changes how work is done, how decisions are made, or how processes operate, the organization has not truly operationalized AI. The distinction between “using AI” and “integrating AI into the business” is central to mature adoption.

2. Organizations jump into AI tools and pilots without a clear value case

Another reason adoption fails is that AI initiatives begin with the technology rather than the business problem. A team wants to test a model. A vendor showcases a capability. Someone proposes a pilot because it sounds innovative. But the organization has not clearly defined the operational problem, the expected business impact, or what would actually change if the pilot succeeded. When that happens, even a technically successful pilot may go nowhere. Leadership cannot scale what has not been tied to meaningful business value.

3. Governance comes too late

In many enterprises, governance is treated as something that can be addressed after the pilot proves successful. That is usually a mistake. Enterprise AI cannot scale without confidence. And confidence depends on governance.

If there are no clear standards around data, accountability, validation, security, observability, and oversight, AI remains difficult to trust. The initiative may continue in a sandbox, but it struggles to move into real operations. Mature enterprise adoption increasingly depends on governance, auditability, role-based controls, and observability, not just model capability.

4. There is no structured path from pilot to production

This is where many AI initiatives lose momentum. A proof of concept may work. A pilot may show promise. Stakeholders may be interested. But the organization often lacks a clear methodology for what comes next.

Who owns the initiative after the pilot? What are the criteria for moving forward? How will the solution be monitored? How will it fit into workflows? What support model exists after launch?

Without a structured pilot-to-product lifecycle, pilots stay pilots. Moving beyond idea-stage piloting to a deliberate pilot-to-product transition, backed by strong data and process governance, is a defining feature of operational AI maturity.

5. AI adoption is fragmented across the business

In many enterprises, different teams explore AI independently. While that can generate ideas, it often creates inconsistency. Each group works differently. Standards vary. Tools vary. Success metrics vary. Governance varies. The result is not enterprise capability. It is distributed experimentation. This is one of the clearest signs that AI maturity is missing an organizing structure.

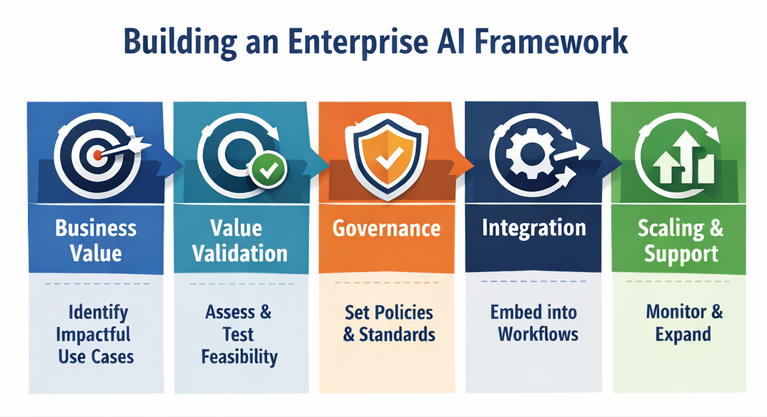

What enterprises should do instead

If the goal is to operationalize AI, the organization needs to approach adoption as a business transformation effort, not a series of isolated technology tests. That means building a more disciplined path from opportunity to execution.

Start with business value, not novelty

Every serious AI initiative should begin with a clear answer to a simple question: What meaningful business problem are we solving?

The strongest use cases are not chosen because they are trendy. They are chosen because they improve an important process, reduce friction, increase speed, lower cost, strengthen decision-making, or create measurable business value. This step matters because it creates alignment early. It also prevents the organization from investing in technically interesting experiments that are strategically weak.

Establish a real methodology for adoption

AI adoption needs structure. That includes clear stages such as value validation, technical feasibility, governed piloting, and transition into operations. Each stage should answer a different question, reduce a different kind of risk, and have clear decision points. Without that structure, organizations often confuse activity with progress. With it, AI becomes easier to evaluate, prioritize, and scale.

Build governance into the process from the start

Governance should not be introduced as a late-stage control. It should be part of the design from the beginning. That means defining standards for:

- data quality and data use

- ownership and accountability

- output validation

- human oversight

- security and access control

- auditability and traceability

- monitoring after deployment

These are not just compliance concerns. They are what make AI usable in the enterprise.

Design for operational fit early

Many AI initiatives stall because the organization proves that the technology works, but never designs how it will function inside real business operations. Operationalization requires more than a working model. It requires process fit, user adoption, support ownership, monitoring, performance measurement, and clarity about how AI will be sustained over time. In other words, production readiness is not just a technical milestone. It is an operational one.

Create a model for scaling, not just piloting

Enterprises do not need more disconnected pilots. They need a repeatable way to identify, validate, govern, and scale the right AI opportunities. That usually requires stronger cross-functional coordination, consistent standards, and a clear operating model for how AI moves through the organization. This is where experience matters most. It is one thing to help a company test AI. It is something else to help it build the structures that allow AI to become repeatable, trusted, and scalable.

From AI curiosity to scalable AI operations

The enterprises making real progress with AI are not necessarily the ones doing the most experimentation. They are the ones that understand that AI adoption becomes meaningful only when it is operationalized. That means moving beyond curiosity. Beyond scattered pilots. Beyond isolated wins. It means creating the conditions for AI to become part of how the business works: governed, validated, measurable, and integrated into real processes.

That shift requires more than technical capability. It requires a practical methodology, strong standards, and an understanding of how AI should move from exploration into enterprise execution. Organizations that get this right are not just adopting AI faster. They are adopting it more sustainably.

Conclusion

AI adoption fails in enterprises for a simple reason: too many organizations focus on the experiment and not enough on the system around it. They test tools without defining the business case. They launch pilots without governance. They prove technical potential without designing for operations. And they mistake AI activity for AI transformation.

The organizations that succeed take a different path. They treat AI as an enterprise capability. They build structure around it. They connect value, governance, piloting, and operational deployment. And they recognize that scalable AI does not come from enthusiasm alone. It comes from disciplined execution. That is what turns AI from a promising initiative into something the business can actually rely on. And that is where the right experience makes all the difference.

This is exactly how we approach enterprise AI adoption. Through our AI Center of Excellence, we help organizations move beyond fragmented experimentation by creating the structure, governance, and operational path required to turn AI into a scalable business capability.